Brás, Carolina; Montenegro, Helena; Cai, Leon Y.; Corbetta, Valentina; Huo, Yuankai; Silva, Wilson; Cardoso, Jaime S.; Landman, Bennett A.; Išgum, Ivana. “Explainable AI for medical image analysis.” Trustworthy AI in Medical Imaging, 2024, pp. 347-366, https://doi.org/10.1016/B978-0-44-323761-4.00028-6.

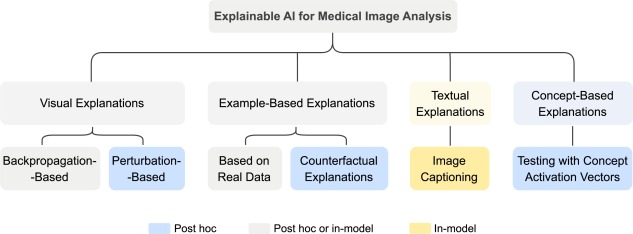

As AI-driven solutions become more common in medical imaging, there is a growing need to make these models more understandable and trustworthy. This chapter focuses on different ways to explain how AI models work in medical image analysis, which is essential for building trust in these systems. We look at four main types of explanations: visual, example-based, textual, and concept-based.

For visual explanations, we explore backpropagation-based and perturbation-based methods, which show how the model uses different parts of the image to make decisions. For example-based explanations, we focus on techniques that compare images to prototypes, measure distances between examples, retrieve similar images, or provide counterfactual explanations (showing how changing an image would change the AI’s decision). Lastly, for textual and concept-based explanations, we look at how to generate image captions and how to use concept activation vectors to interpret the AI’s understanding of different concepts.
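To make the perturbation idea concrete, the following is a minimal sketch of occlusion sensitivity, one common perturbation-based approach: each image patch is masked in turn, and the resulting drop in the model's score is recorded as that patch's importance. It is not taken from the chapter. The names `occlusion_map` and `toy_score`, the patch size, and the fill value are assumptions chosen for illustration, and `toy_score` is a hypothetical stand-in for a trained classifier.

```python
def occlusion_map(image, score_fn, patch=2, fill=0.0):
    """Return a heatmap holding the score drop caused by occluding each patch.

    image    : 2D list of floats (a grayscale image)
    score_fn : callable mapping an image to a scalar score (assumed model output)
    patch    : side length of the square occlusion window (illustrative choice)
    fill     : value written into the occluded region (illustrative choice)
    """
    height, width = len(image), len(image[0])
    baseline = score_fn(image)
    heat = [[0.0] * width for _ in range(height)]

    for top in range(0, height, patch):
        for left in range(0, width, patch):
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))

            # Copy the image and mask out the current patch.
            occluded = [row[:] for row in image]
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill

            # A large drop means the model relied on this region.
            drop = baseline - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_score(image):
    # Hypothetical "model": the score is the mean intensity of the top-left 2x2 block.
    return sum(image[r][c] for r in range(2) for c in range(2)) / 4.0


image = [[1.0] * 4 for _ in range(4)]
heat = occlusion_map(image, toy_score)
```

In this toy setup only the top-left patch receives a nonzero value in `heat`, because it is the only region the stand-in model reads. With a real classifier, `score_fn` would typically return the predicted probability of the class being explained.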

The goal of this chapter is to explain how each method works, what its strengths and weaknesses are, and how to interpret its explanations in the context of medical image analysis.

Figure 16.1. Overview of explainable AI approaches used in medical image analysis and corresponding classification as in-model or post-hoc explanations.