Zhang, Enzhi; Lyngaas, Isaac; Chen, Peng; Wang, Xiao; Igarashi, Jun; Huo, Yuankai; Munetomo, Masaharu; Wahib, Mohamed. “Adaptive Patching for High-resolution Image Segmentation with Transformers.” International Conference for High Performance Computing, Networking, Storage and Analysis, SC, 2024, https://doi.org/10.1109/SC41406.2024.00082.

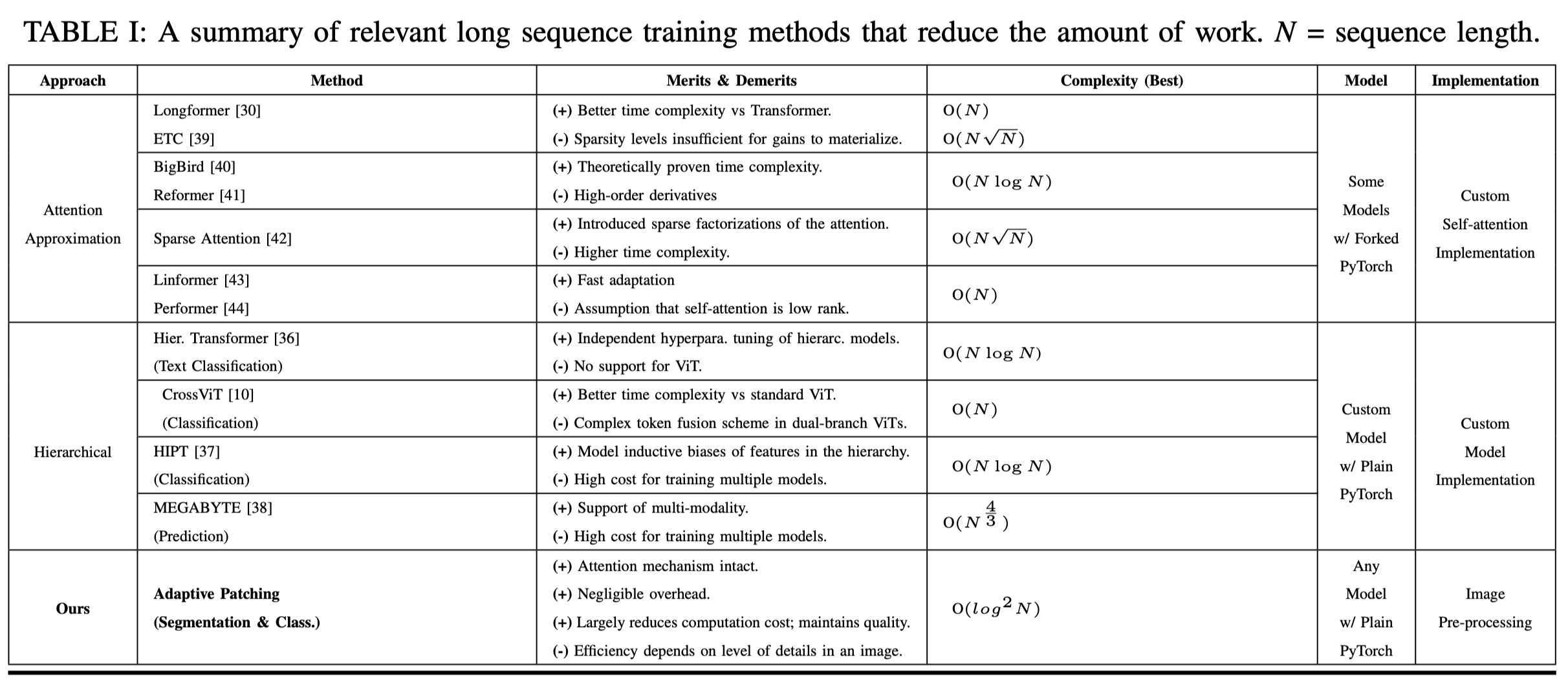

Attention-based models are increasingly popular for image analysis and segmentation, but high-resolution images, such as those used in pathology, pose a serious challenge. The standard approach splits an image into small, fixed-size patches and feeds them to the model as a sequence of tokens. Because the cost of self-attention grows quadratically with sequence length, this quickly becomes prohibitively expensive for high-resolution images, making it difficult to use these models efficiently. Existing workarounds either adopt complex multi-resolution architectures or replace full attention with simplified, approximate variants, and both approaches come with their own drawbacks.
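To make the scaling concrete, the short Python sketch below counts the tokens produced by uniform patching and the number of pairwise scores that full self-attention would compute. The 16 × 16 patch size and the reading of 64K as 65,536 pixels are illustrative assumptions, not settings taken from the paper. Under these assumptions, a 64K × 64K image yields 16,777,216 tokens, so full attention would need roughly 2.8 × 10¹⁴ scores per head in every layer.

```python
# Illustrative arithmetic only: the 16x16 patch size and 64K = 65,536 pixels
# are assumptions for this example, not parameters from the paper.


def uniform_patch_count(height: int, width: int, patch: int) -> int:
    """Number of non-overlapping patch x patch tiles that cover the image."""
    return (height // patch) * (width // patch)


def attention_matrix_entries(num_tokens: int) -> int:
    """Full self-attention scores every token against every token: N * N entries."""
    return num_tokens * num_tokens


if __name__ == "__main__":
    for side in (1024, 8192, 65536):
        n = uniform_patch_count(side, side, patch=16)
        scores = attention_matrix_entries(n)
        print(f"{side}x{side}: {n:,} tokens, {scores:.3e} attention scores per head")
```

Doubling the image side quadruples the token count and multiplies the attention cost by sixteen. This is why reducing the number of patches pays off more than speeding up any single operation.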

In this study, we drew inspiration from Adaptive Mesh Refinement (AMR), a technique widely used in high-performance computing. Instead of dividing the entire image into uniform patches upfront, we adapt the patches to the local detail in the image: regions with fine structure receive many small patches, while homogeneous regions are covered by a few large ones. This significantly reduces the number of patches, and therefore tokens, that the model must process. The approach adds negligible overhead and can be applied to any attention-based model. Compared with existing state-of-the-art segmentation models, our method improved segmentation quality while delivering an average speedup of 6.9×, for resolutions of up to 64K² pixels, on up to 2,048 GPUs.
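The adaptive idea can be sketched as a quadtree, in the same way that AMR refines a coarse mesh only where the solution varies. The Python below is a minimal illustration under stated assumptions, not the authors' implementation. The detail measure (pixel standard deviation), the function names `adaptive_patches` and `to_tokens`, the size and threshold values, and the average pooling of every patch down to a fixed 16 × 16 token are all hypothetical choices made for clarity.

```python
import numpy as np


def adaptive_patches(image, min_size=16, max_size=256, threshold=10.0):
    """Quadtree-split a square grayscale image into variable-size patches.

    Hypothetical detail criterion: a block is refined (split into four
    quadrants) while its pixel standard deviation exceeds `threshold` and it
    is still larger than `min_size`. Assumes max_size = min_size * 2**k and a
    square image whose side is a multiple of max_size.
    Returns a list of (row, col, size) tuples covering the whole image.
    """
    h, w = image.shape
    if h != w or h % max_size:
        raise ValueError("sketch expects a square image tiled exactly by max_size")
    patches = []

    def refine(r, c, size):
        block = image[r:r + size, c:c + size]
        if size > min_size and block.std() > threshold:
            half = size // 2
            for dr in (0, half):
                for dc in (0, half):
                    refine(r + dr, c + dc, half)
        else:
            patches.append((r, c, size))  # leaf: keep this block as one patch

    # Start from a coarse uniform grid of max_size blocks (the "base mesh").
    for r in range(0, h, max_size):
        for c in range(0, w, max_size):
            refine(r, c, max_size)
    return patches


def to_tokens(image, patches, token_size=16):
    """Average-pool every patch to token_size x token_size so all tokens share one length."""
    tokens = []
    for r, c, size in patches:
        f = size // token_size  # pooling factor: 1 for the finest patches
        block = image[r:r + size, c:c + size]
        pooled = block.reshape(token_size, f, token_size, f).mean(axis=(1, 3))
        tokens.append(pooled.ravel())
    return np.stack(tokens)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    img = np.zeros((1024, 1024))
    img[100:200, 300:400] = rng.normal(0.0, 50.0, size=(100, 100))  # one detailed region
    patches = adaptive_patches(img)
    print(len(patches), "adaptive patches vs", (1024 // 16) ** 2, "uniform 16x16 patches")
    print("token matrix shape:", to_tokens(img, patches).shape)
```

On this synthetic 1024 × 1024 example, only the 256 × 256 tile that contains the textured region is refined, so the patch count stays far below the 4,096 tokens that a uniform 16 × 16 grid would produce. Pooling every leaf to the same size keeps the token length constant, as a standard transformer expects. A real pipeline would also need to encode each patch's position and scale, which this sketch omits.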